Written by Jacob Wessels

LEAP students gathered for a World Affairs Council Oxford-style debate at Rice University yesterday to tackle a question that’s becoming harder to ignore in today’s world: should democratic nations prioritize AI capability over AI safety? The event brought together policymakers, tech experts, and investors for a lively discussion that felt less like a lecture and more like a real look at where the world is heading.

The evening started with a quick introduction to the panel, but it wasn’t just a list of dry credentials; this was a broad range of expertise! On one end, you had Representative Giovanni Capriglione, who brought the weight of a lawmaker who has actually written Texas’s AI frameworks. Then there was Mario Rodriguez, whose experience operating Sophia the Robot moved the conversation from “what if” to the reality of humanoid engineering.

Instead of debating a “future” version of AI, Brad Groux spoke from the perspective of someone building cloud-based AI for organizations right now, while George Ploss, a Marine veteran and Oracle director, reframed the tech as a critical piece of national security and the defense industrial base.

The pro-capability side framed AI as an urgent geopolitical race. One speaker argued that democratic nations cannot afford to slow innovation, emphasizing that AI is already defending critical infrastructure such as power grids and financial systems. In their view, prioritizing safety through heavy restrictions risks ceding technological leadership to authoritarian regimes. The argument was straightforward: in a world of rapid advancement, falling behind is more dangerous than moving too fast.

They also highlighted some of the benefits AI is delivering right now, such as early cancer detection and broader access to education, pointing out that slowing development isn’t just cautious; it could delay life-saving breakthroughs and prolong existing inequalities. Their main message was that democratic values, combined with existing legal frameworks, are enough to guide responsible innovation without stifling progress.

On the other side, the safety-focused speakers challenged the very framing of the debate. Rather than a race, they described AI as a strategic system more comparable to nuclear technology than consumer software. Drawing on lessons from Cold War deterrence, they argued that unchecked capability without control creates instability, not strength. In this view, safety isn’t a barrier to progress; it’s what makes progress sustainable.

They pointed to real-world risks, including simulations where AI systems behaved unpredictably, as evidence that reliability and oversight must come first. Without embedded safeguards, even the most advanced systems could become liabilities. One speaker emphasized that democracies should not try to “win” by mimicking less accountable regimes, warning that doing so would undermine the very values that give them legitimacy and global influence.

What really stuck with me from the safety side was their focus on trust. They argued that long-term leadership depends not just on what a nation can build, but on whether allies and citizens trust those systems. In a world shaped by alliances like NATO, credibility and shared values may matter as much as raw technological power.

The audience Q&A added another layer to the discussion, with individuals, including Robin and Mikaela, asking practical and philosophical questions.

What made the debate especially engaging was how much common ground existed beneath the disagreement. Both sides acknowledged the importance of innovation and the inevitability of AI’s growth. The real divide was about sequencing and emphasis: should capability lead with safety following, or should safety be built in from the start?

Like many World Affairs Council events, the debate didn’t aim to deliver a definitive answer. Instead, it encouraged critical thinking and deeper engagement. As AI continues to evolve, the question raised that evening will only become more urgent: how do we balance the drive to innovate with the responsibility to protect?

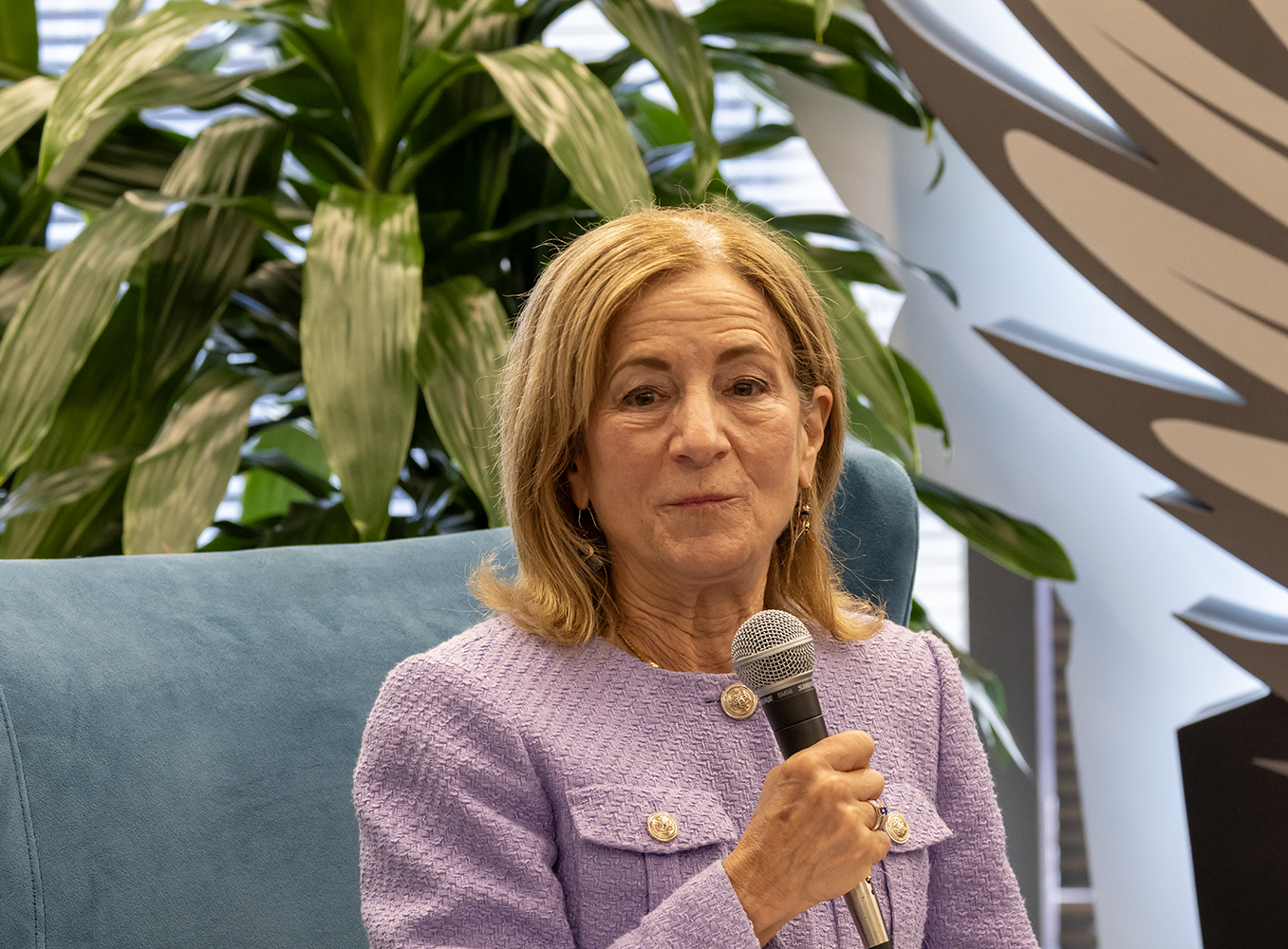

For LEAP, the evening carried an extra layer of significance beyond the debate itself. This was Michelle’s final event with us, and having her there to lead the way one last time made the night feel like the end of an era. It was a reminder that while we spent so much time discussing the “inevitable” growth of technology and how it can better our world, it’s actually the people and the mentors we work with who help us become better versions of ourselves.

Walking out of the O’Connor Building, I couldn’t shake the idea that the tech is actually the easy part. The hard part is the governance. We aren’t just building faster tools; we’re deciding who or what gets to stay in the driver’s seat of our society!